Nvidia is on a tear, and it doesn’t seem to have an expiration date.

Nvidia makes the graphics processors, or GPUs, that are needed to build AI applications like ChatGPT. In particular, there’s extreme demand for its highest-end AI chip, the H100, among tech companies right now.

Nvidia’s overall sales grew 171% on an annual basis to $13.51 billion in its second fiscal quarter, which ended July 30, the company announced Wednesday. Not only is it selling a bunch of AI chips, but they’re more profitable, too: The company’s gross margin expanded by more than 25 percentage points from the same quarter last year, to 71.2%, an incredible figure for a physical product.

Plus, Nvidia said it sees demand remaining high through next year and that it has secured increased supply, enabling it to increase the number of chips it has on hand to sell in the coming months.

The company’s stock rose more than 6% after hours on the news, adding to its remarkable gain of more than 200% this year so far.

It’s clear from Wednesday’s report that Nvidia is profiting more from the AI boom than any other company.

Nvidia reported an incredible $6.7 billion in net income in the quarter, a 422% increase over the same time last year.

“I think I was high on the Street for next year coming into this report but my numbers have to go way up,” wrote Chaim Siegel, an analyst at Elazar Advisors, in a note after the report. He lifted his price target to $1,600, a “3x move from here,” and said, “I still think my numbers are too conservative.”

He said that price suggests a multiple of 13 times 2024 earnings per share.

Nvidia’s prodigious cash flow contrasts with the situation at its top customers, which are spending heavily on AI hardware and building multimillion-dollar AI models but haven’t yet started to see income from the technology.

About half of Nvidia’s data center revenue comes from cloud providers, followed by big internet companies. The growth in Nvidia’s data center business came from “compute,” or AI chips, which grew 195% year over year during the quarter, outpacing the overall business’s growth of 171%.

Microsoft, which has been a huge customer of Nvidia’s H100 GPUs, both for its Azure cloud and its partnership with OpenAI, has been increasing its capital expenditures to build out its AI servers, and doesn’t expect a positive “revenue signal” until next year.

On the consumer internet front, Meta said it expects to spend as much as $30 billion this year on data centers, and possibly more next year as it works on AI. Nvidia said on Wednesday that Meta was seeing returns in the form of increased engagement.

Some startups have even gone into debt to buy Nvidia GPUs in hopes of renting them out for a profit in the coming months.

On an earnings call with analysts, Nvidia officials offered some perspective on why its data center chips are so profitable.

Nvidia said its software contributes to its margin and that it is selling more complicated products than mere silicon. Analysts cite Nvidia’s AI software, called CUDA, as the primary reason customers can’t easily switch to competitors like AMD.

“Our Data Center products include a significant amount of software and complexity which is also helping for gross margins,” Nvidia finance chief Colette Kress said on a call with analysts.

Nvidia is also packaging its technology into expensive and complicated systems like its HGX box, which combines eight H100 GPUs into a single computer. Nvidia boasted on Wednesday that building one of these boxes uses a supply chain of 35,000 parts. Reports put the price of an HGX box at around $299,999, versus a volume price of between $25,000 and $30,000 for a single H100, according to a recent Raymond James estimate.

Nvidia said that as it ships its coveted H100 GPU to cloud service providers, those customers are often opting for the more complete system.

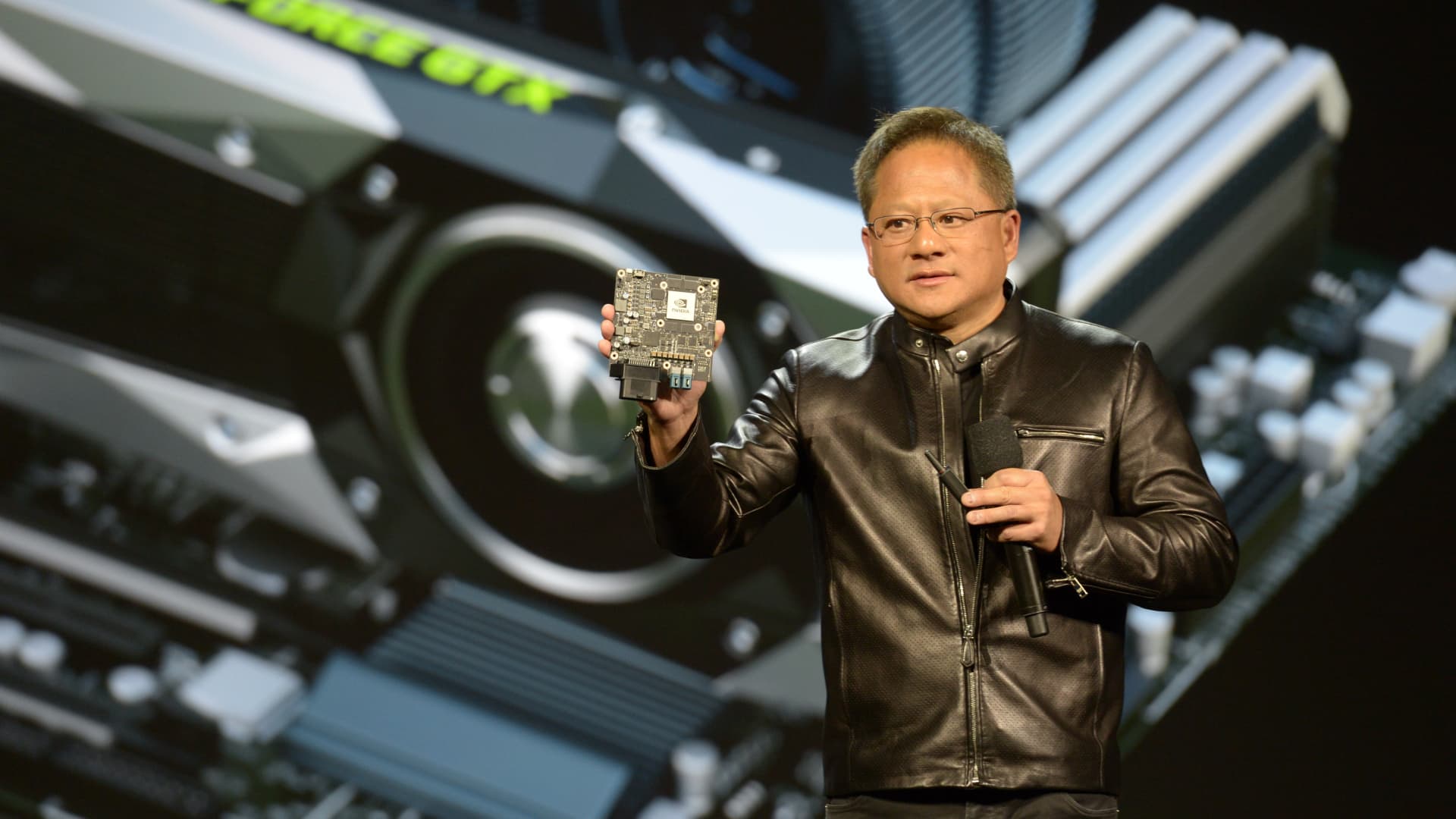

“We call it H100, as if it’s a chip that comes off of a fab, but H100s go out, really, as HGX to the world’s hyperscalers and they’re really quite large system components,” Nvidia CEO Jensen Huang said on a call with analysts.